CASE STUDY: Optical Target Tracker

Portable Camera-Based Object Tracking: Mastering Simulation-Trained AI at the Edge

Building a portable optical tracking system using digital twin simulation, custom machine learning models, and edge AI deployment.

Camera-Based Aerial Object Tracking

Services

Hardware Engineering, Software Development, AI Development

Project Timeline

12 months

Tech Stack

NVIDIA Omniverse, Custom ML Training Pipeline, Embedded Linux, DJI Gimbal Integration, Camera-Based Tracking, Compact Edge Computing Platform

The Challenge

Drones have become an asymmetric threat across defense, security, and public safety applications. Traditional counter-drone systems rely on radar technology, which provides excellent range but requires significant power and infrastructure. We knew there had to be a better solution — one that could be deployed quickly and with minimal resources.

“We could have waited for a client to fund this exploration, but that would have made us followers, not leaders. By investing our own resources into ambitious R&D, we master technologies before our clients need them—which means we can deliver faster, more innovative solutions when opportunities arise.”

Jonathan Pardeck, President, Systematic Consulting Group

Define & Discovery

Camera-Based Tracking

Camera-based optical tracking offers a compelling alternative to radar-based technology. It dramatically lowers power consumption, offers smaller form factors, and leverages rapidly advancing computer vision capabilities.

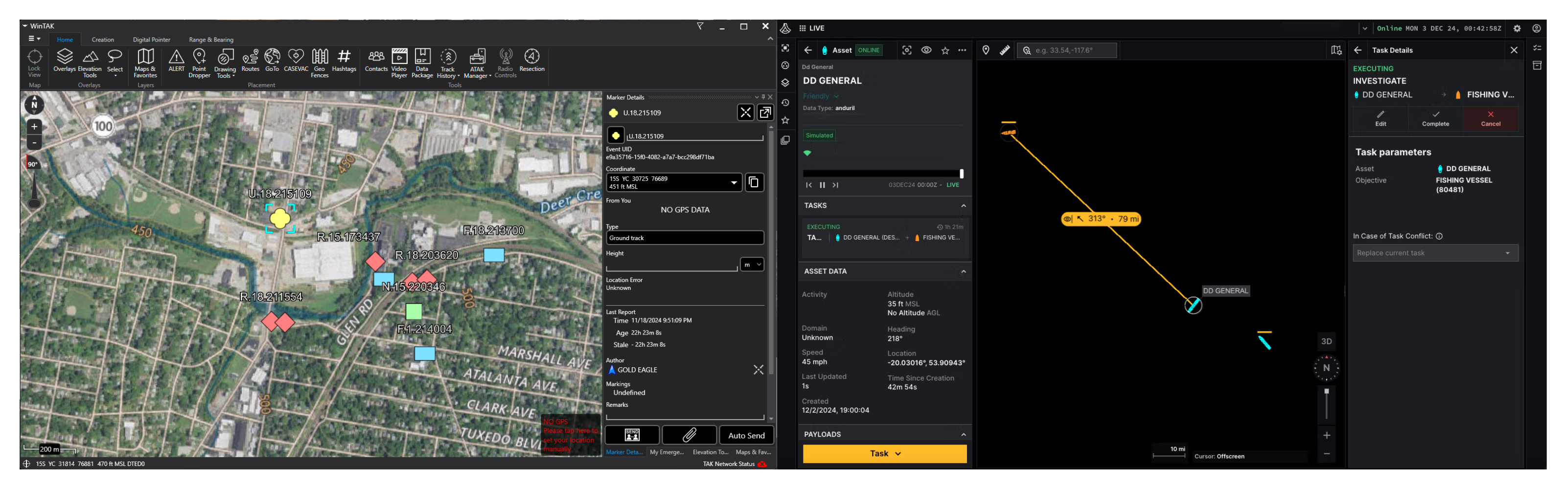

However, camera-based tracking at distance requires solving complex technical challenges: detecting 1-2 meter-wide targets at 2+ kilometers, maintaining focus across varying zoom levels while tracking moving objects, deploying AI on resource-constrained hardware consuming less than 20 W, and achieving this in a man-portable package under 50 pounds with 24+ hour unattended runtime. The system also needed rapid deployment (<10 minutes setup) and integration with established Command & Control platforms including TAK and Anduril Lattice.
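A rough back-of-envelope estimate shows why these targets are demanding. The sketch below uses the small-angle approximation to estimate how many pixels a distant target spans, and multiplies out the power and runtime figures into an energy budget. The focal length and pixel pitch in the usage example are illustrative assumptions, not the specifications of the hardware used in this project.

```python
def pixels_on_target(target_width_m, range_m, focal_length_mm, pixel_pitch_um):
    """Approximate pixels spanned by a target (small-angle, thin-lens estimate)."""
    angular_size_rad = target_width_m / range_m          # small-angle approximation
    image_size_mm = angular_size_rad * focal_length_mm   # size of the image on the sensor
    return image_size_mm * 1000.0 / pixel_pitch_um       # mm -> um, then um -> pixels


def energy_budget_wh(power_w, hours):
    """Energy needed to sustain a constant power draw for a given runtime."""
    return power_w * hours


# Hypothetical optics: 100 mm focal length, 2 um pixel pitch.
print(pixels_on_target(1.0, 2000.0, 100.0, 2.0))  # about 25 pixels for a 1 m target at 2 km
print(energy_budget_wh(20, 24))                   # 480 Wh upper bound for 20 W over 24 hours
```

Under these assumed optics, a 1 meter target at 2 kilometers covers only a couple of dozen pixels, which leaves little margin for focus error or atmospheric blur.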

Architect & Design

Simulation-First Development Strategy

Traditional machine learning development for computer vision follows a resource-intensive cycle: capture thousands of real-world images, manually annotate each frame with bounding boxes, train models, test in field conditions, and iterate. For drone tracking specifically, this means coordinating drone flights, camera equipment, various weather conditions, and hours of manual labeling.

We identified an alternative approach: leveraging digital twin simulation to generate synthetic training data. Recent advances in tools like NVIDIA Omniverse have made it possible to create photorealistic environments where virtual drones fly realistic patterns while virtual cameras capture training data with perfect automatic labeling.
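Automatic labeling works because the simulator already knows where each virtual drone appears in every rendered frame. As a minimal sketch, assuming the simulator reports a pixel-space bounding box for each object (the function name and input layout here are hypothetical, not the Omniverse API), converting that box into the normalized text format commonly used for YOLO training labels looks like this:

```python
def to_yolo_label(class_id, x_min, y_min, x_max, y_max, img_w, img_h):
    """Convert a pixel-space box into a YOLO label line.

    YOLO labels are 'class cx cy w h', with the box center and size
    normalized to the image width and height.
    """
    cx = (x_min + x_max) / 2.0 / img_w
    cy = (y_min + y_max) / 2.0 / img_h
    w = (x_max - x_min) / img_w
    h = (y_max - y_min) / img_h
    return f"{class_id} {cx:.6f} {cy:.6f} {w:.6f} {h:.6f}"


# Hypothetical simulator output: a drone (class 0) in a 1000x800 frame.
print(to_yolo_label(0, 100, 200, 300, 400, 1000, 800))
# 0 0.200000 0.375000 0.200000 0.250000
```

Because the box comes from the renderer rather than a human annotator, every frame is labeled the moment it is generated.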

Develop & Iterate

From Simulation to Reality

The development phase both validated our simulation approach and revealed critical lessons about real-world implementation.

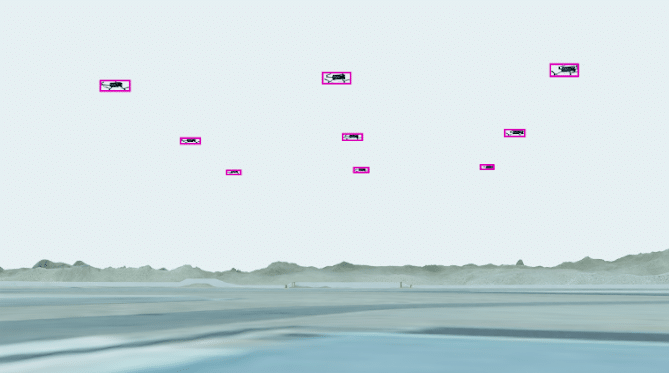

Model Training Success

Our simulation-based training pipeline produced a model that successfully transitioned from virtual environments to real-world drone tracking. This proved the viability of synthetic data for this class of computer vision problems—a finding that’s directly applicable to our client work in sports technology, robotics, and industrial automation.

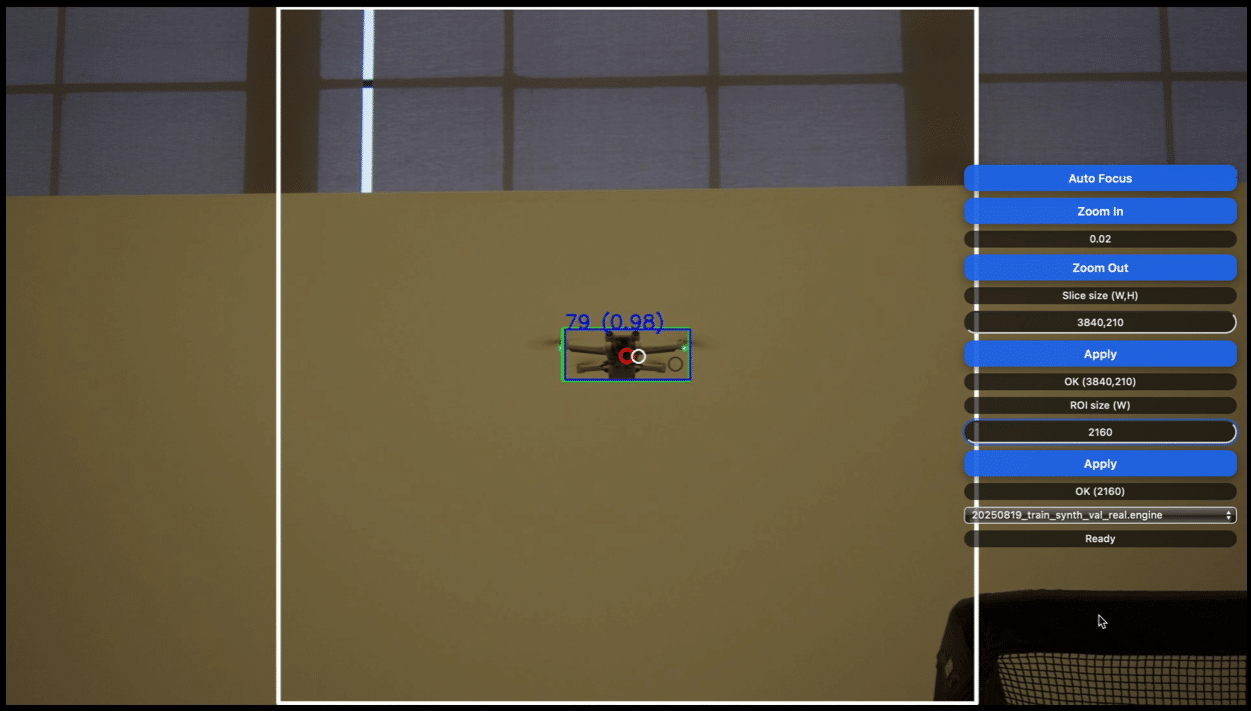

Tracking Performance and Technical Constraints

Field testing validated reliable detection and tracking of DJI M30T (Group 1 UAS) drones at 100-150 meters. While this fell well short of our 2+ kilometer target range, it revealed critical insights about the practical limits of commercial optical components. Three specific limitations, largely stemming from COTS hardware, constrained extended-range performance:

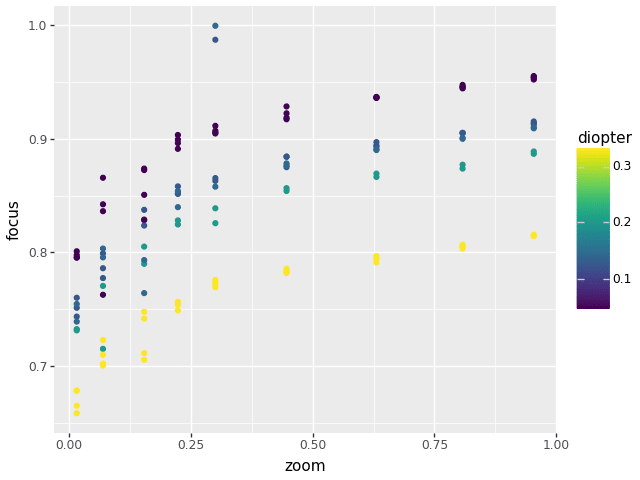

- Autofocus quality and speed – The varifocal lens behavior required custom focus calibration across zoom levels to achieve parfocal-like performance

- Low-frequency joint position updates – 660 ms command latency and 2 Hz position updates from the gimbal API limited tracking responsiveness during rapid target maneuvers

- Detection model reliability – Unfocused targets at extended distances reduced AI detection confidence, even with our simulation-trained YOLO 11 model
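To put the gimbal figures above in perspective, a simple kinematic estimate shows how far a target can drift in angle while a command is in flight. This is a sketch only: it assumes a target crossing perpendicular to the line of sight, and the 15 m/s speed in the example is an illustrative assumption. It is not a model of the gimbal's actual control loop.

```python
import math


def angular_lag_deg(target_speed_mps, range_m, delay_s):
    """Angle a crossing target sweeps during a delay.

    Assumes motion perpendicular to the line of sight, so the
    angular rate is approximately speed / range (radians per second).
    """
    angular_rate_rad_s = target_speed_mps / range_m
    return math.degrees(angular_rate_rad_s * delay_s)


COMMAND_LATENCY_S = 0.660      # 660 ms command latency, from the field results
UPDATE_PERIOD_S = 1.0 / 2.0    # 2 Hz position updates -> 0.5 s between readings

# Hypothetical target: 15 m/s crossing speed at 150 m range.
print(round(angular_lag_deg(15.0, 150.0, COMMAND_LATENCY_S), 2))  # 3.78 degrees
print(round(angular_lag_deg(15.0, 150.0, UPDATE_PERIOD_S), 2))    # 2.86 degrees
```

At high zoom the field of view can be only a few degrees wide, so a lag of this size may be enough to carry a maneuvering target out of frame before the gimbal responds.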

Despite these range limitations, the system successfully achieved its integration objectives—publishing real-time tracks with geospatial coordinates (latitude, longitude, altitude) and thumbnail images to both Anduril Lattice and TAK/ATAK Command & Control platforms.
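For readers unfamiliar with TAK, tracks are typically exchanged as Cursor on Target (CoT) XML events. The sketch below builds a minimal CoT event for a single track using only the Python standard library. It is an illustration of the message shape, not the integration code used in this project: the `build_cot_event` helper, the UID, the coordinates, and the choice of type code `a-u-A` (unknown air track) and `how` code are all assumptions, and thumbnail attachment and network transport are omitted.

```python
from datetime import datetime, timedelta, timezone
import xml.etree.ElementTree as ET

COT_TIME_FORMAT = "%Y-%m-%dT%H:%M:%SZ"


def build_cot_event(uid, lat, lon, hae_m, stale_s=10, now=None):
    """Build a minimal Cursor on Target event as an XML string (hypothetical helper)."""
    now = now or datetime.now(timezone.utc)
    event = ET.Element("event", {
        "version": "2.0",
        "uid": uid,
        "type": "a-u-A",   # assumed: atom, unknown affiliation, air
        "how": "m-g",      # assumed: machine-generated position
        "time": now.strftime(COT_TIME_FORMAT),
        "start": now.strftime(COT_TIME_FORMAT),
        "stale": (now + timedelta(seconds=stale_s)).strftime(COT_TIME_FORMAT),
    })
    ET.SubElement(event, "point", {
        "lat": f"{lat:.6f}",
        "lon": f"{lon:.6f}",
        "hae": f"{hae_m:.1f}",   # height above ellipsoid, in meters
        "ce": "9999999.0",       # circular error unknown
        "le": "9999999.0",       # linear error unknown
    })
    return ET.tostring(event, encoding="unicode")


# Hypothetical track near Tampa Bay at 30 m altitude.
print(build_cot_event("tracker-demo-1", 27.94, -82.45, 30.0))
```

The short `stale` window reflects a design choice for moving targets: if updates stop arriving, the track should age off the operator's map quickly rather than linger at an outdated position.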

Edge AI Implementation

We successfully deployed the trained model to run locally on compact embedded hardware, demonstrating that sophisticated AI doesn’t require cloud connectivity or powerful GPUs. This edge-first architecture is increasingly critical for applications requiring low latency, privacy preservation, or operation in network-constrained environments.

Simulation-Trained AI Model Performance

The YOLO 11-based detection model, trained entirely on NVIDIA Replicator synthetic data, exhibited qualitatively strong real-world performance despite never seeing actual drone footage during training. This validated the simulation-first approach for rapid model development: comprehensive, automatically annotated training datasets were generated in days rather than the months typically required for field data collection and manual annotation.

The model architecture supported hot-swappable capabilities, allowing the system to be re-tasked on the fly to track different object types. At SOFWEEK 2025, we demonstrated this flexibility by simultaneously tracking both boats and people in Tampa Bay using the same hardware platform with dynamically loaded detection models.
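One way to picture hot-swapping is a thread-safe slot that holds the active detector and can be replaced while the tracking loop keeps running. The sketch below is a generic illustration under that assumption; the `ModelSlot` class and the loader function are hypothetical and do not describe the project's actual implementation.

```python
import threading


class ModelSlot:
    """Holds the active detection model and allows it to be swapped at runtime."""

    def __init__(self, loader):
        self._loader = loader          # callable: model name -> callable model
        self._lock = threading.Lock()
        self._name = None
        self._model = None

    def swap(self, name):
        """Load a new model first, then switch over atomically."""
        new_model = self._loader(name)  # slow load happens outside the lock
        with self._lock:
            self._name, self._model = name, new_model

    def detect(self, frame):
        """Run the currently active model on a frame."""
        with self._lock:
            name, model = self._name, self._model
        if model is None:
            raise RuntimeError("no model loaded")
        return name, model(frame)


# Stand-in loader for illustration: each "model" just tags the frame.
def fake_loader(name):
    return lambda frame: f"{name} detections for {frame}"


slot = ModelSlot(fake_loader)
slot.swap("drone")
print(slot.detect("frame-1"))  # ('drone', 'drone detections for frame-1')
slot.swap("boat")
print(slot.detect("frame-2"))  # ('boat', 'boat detections for frame-2')
```

Loading the replacement before taking the lock keeps the tracking loop from stalling while a new model is read from storage.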

The Results

The twelve-month project delivered more than a working prototype. It built organizational capabilities that benefit every subsequent project.

Simulation Infrastructure

We now have proven workflows for using digital twins to accelerate machine learning development. This infrastructure has already been deployed for commercial clients in sports technology, where we’re using similar techniques to track objects in complex visual environments.

Optical System Design Knowledge

We now understand what the physics of long-range camera systems allows. That knowledge helps us prevent expensive mistakes when designing vision systems for clients and specify appropriate components faster for any application.

Rapid Prototyping Methodology

The project validated our ability to move from concept to functional prototype quickly by leveraging simulation, commercial components, and agile development practices.